Story 2: Agentic Flow

On optimal conditions, the reflection loop, and the question that has no answer

The room still has no windows. The same four are gone — but the questions they left behind aren’t.

Not because they’re hidden. Time there simply doesn’t exist the way it does outside. It’s like a server room running processes nobody sees until they break.

Somewhere in Kranj, in a room with a large whiteboard, two experts sat — each deeply rooted in their own world.

One was Jaka: a specialist in LSP and in Flow in the tradition of Csikszentmihályi, a member of The Flow Research Collective, and someone who knows how to create the conditions in which people do their best work. The other was Aleš, a strategist and the creator of AIVaaS™ (AI Value as a Service), a methodology for systematic AI business transformation, who is always searching for ways to bring it to life.

Aleš picked up the marker and wrote on the whiteboard:

“Does Agentic Flow exist?”

The thesis was provocative.

“Flow is always biological,” Jaka said. “It’s grounded in hormones, neurotransmitters, the body. AI knows only zeros and ones.”

Aleš nodded, then quietly added: “But what about the results of Flow? Focus, maximum output, engagement, satisfaction. The point where challenge meets capability. Isn’t that exactly what we’re looking for in agents?”

Silence.

And in that silence, this thought experiment was born.

* * *

What Flow Actually Is

Before we engage with agents, we owe Csikszentmihályi honesty. Flow was never just a metaphor. It was first defined as a psychological phenomenon — and later research began tracing its neurological correlates.

Three Conditions for Flow

Flow occurs when three conditions align: skills are matched to the challenge, the goal is clear, and feedback is immediate and unambiguous.

The Neurochemical Cocktail

During Flow, the brain releases a cascade of five neurochemicals: norepinephrine, dopamine, anandamide, serotonin, and endorphins. Each of them enhances performance, both physically and mentally.

And here is the key point Jaka raised:

Flow is impossible without biology

No dopamine reward system. No endocannabinoid anandamide to widen lateral thinking. No hormones to suppress pain and stress.

An AI agent has none of this. No hormones. No body. No fear to overcome.

Jaka was right.

And that’s exactly why the question becomes more interesting.

* * *

Agentic Flow - A Definition

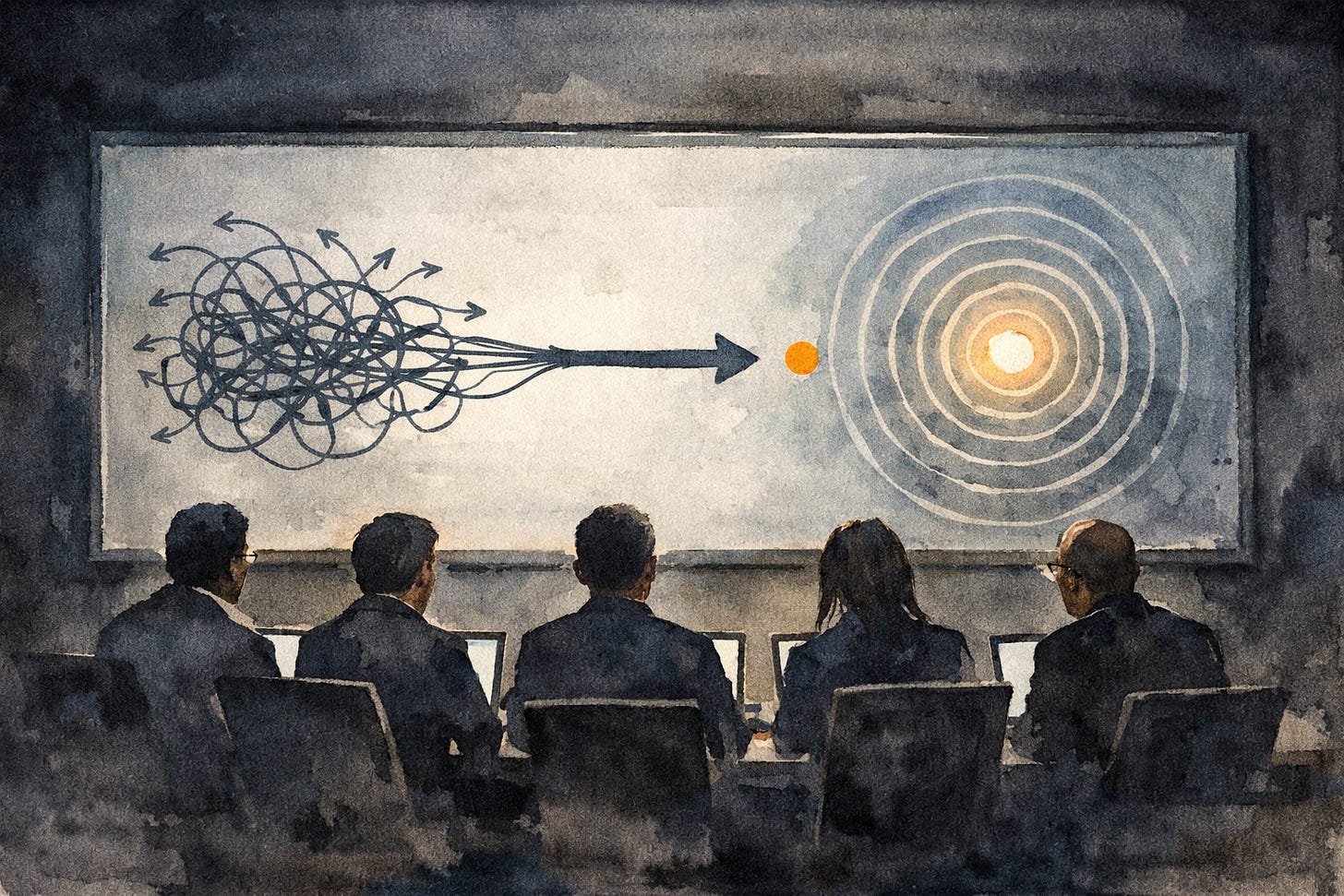

We’re not claiming AI agents experience Flow. We’re claiming that structural conditions exist which, in an agentic system, produce functionally equivalent results — not as experience, but as system output.

Agentic Flow is the state of an agentic system in which:

1. The task is optimally calibrated to the agent’s capabilities

2. Purpose is semantically clear: the agent operates within a consistent “business constitution”

3. The feedback loop is tight and fast: the system immediately knows whether it’s on track

4. Context is coherent: no conflicting signals, data is aligned

5. The system knows its limits: it escalates rather than hallucinates

6. Orchestration is invisible: agents pass context fluidly, without friction at the seams

The result of this state isn’t experience. It’s output, and that output comes close to what human Flow produces: focus, maximum effect, minimal overhead.

* * *

Six Conditions - Prompted

Condition 1: Optimal task calibration

Agents given tasks that are too simple start to “clone”: they produce variations of the same output without real progress. Agents given tasks beyond their capabilities begin to confabulate, producing plausible but wrong outputs with complete confidence.

Condition 2: Semantic clarity of purpose

This is the “business constitution” we discussed in the first story. An agent cannot be in Flow if it doesn’t know what it’s acting in service of.

Condition 3: Tight feedback loop

Flow in humans requires immediate feedback. The same applies to agents. A system that waits hours for evaluation cannot self-correct. Feedback must be embedded in the loop itself. This is where the reflection loop enters: not as introspection, but as structured self-correction — generate, evaluate, revise.
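Here is a minimal sketch of the generate, evaluate, revise cycle. It assumes the three steps can be supplied as plain functions. In a real agentic system each step would likely wrap a model call. Here they are hypothetical toy stubs so that the control flow stays visible. The names `reflection_loop`, `Critique`, and `max_rounds` are illustrative and are not taken from any particular framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Critique:
    ok: bool        # did the draft pass evaluation?
    feedback: str   # what to fix if it did not


def reflection_loop(
    task: str,
    generate: Callable[[str], str],
    evaluate: Callable[[str, str], Critique],
    revise: Callable[[str, str, str], str],
    max_rounds: int = 3,
) -> tuple[str, bool, int]:
    """Generate a draft, then evaluate and revise it until the evaluator
    accepts it or the round budget runs out.
    Returns (draft, accepted, rounds_used)."""
    draft = generate(task)
    for round_no in range(1, max_rounds + 1):
        critique = evaluate(task, draft)  # feedback inside the loop, not hours later
        if critique.ok:
            return draft, True, round_no
        if round_no < max_rounds:
            draft = revise(task, draft, critique.feedback)
    # Budget exhausted: report failure instead of pretending success.
    return draft, False, max_rounds


# Hypothetical toy stubs standing in for model calls.
def toy_generate(task: str) -> str:
    return "red, green"


def toy_evaluate(task: str, draft: str) -> Critique:
    items = [s.strip() for s in draft.split(",") if s.strip()]
    if len(items) == 3:
        return Critique(True, "")
    return Critique(False, f"expected 3 items, got {len(items)}")


def toy_revise(task: str, draft: str, feedback: str) -> str:
    return draft + ", blue"


result, accepted, rounds = reflection_loop(
    "Name three primary colors", toy_generate, toy_evaluate, toy_revise
)
print(result, accepted, rounds)  # red, green, blue True 2
```

The round budget is a deliberate design choice. A loop that cannot converge returns `accepted = False` rather than revising forever. That outcome can then be escalated, in the spirit of Condition 5.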

Condition 4: Contextual coherence

Conflicting signals are the enemy of Flow, in humans and in agents. If the CRM says one thing and the instructions say another, the agent doesn’t freeze. It improvises. And improvisation without grounding is sophisticated guessing.
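One way to keep an agent from improvising is to check its inputs for contradictions before it acts. The sketch below is minimal and assumes that each source (for example the CRM and the instructions) can be flattened into key-value facts. The function names and the field names in the example are hypothetical.

```python
from typing import Any


def find_conflicts(sources: dict[str, dict[str, Any]]) -> dict[str, dict[str, Any]]:
    """Return every key on which two or more sources disagree,
    mapped to the value each source reported for it."""
    seen: dict[str, dict[str, Any]] = {}
    for source_name, facts in sources.items():
        for key, value in facts.items():
            seen.setdefault(key, {})[source_name] = value
    # repr() lets us compare values even when they are unhashable (lists, dicts).
    return {
        key: by_source
        for key, by_source in seen.items()
        if len({repr(v) for v in by_source.values()}) > 1
    }


def coherent_context(sources: dict[str, dict[str, Any]]):
    """Merge sources only if they agree; otherwise return the conflicts
    so they can be resolved or escalated instead of guessed away."""
    conflicts = find_conflicts(sources)
    if conflicts:
        return None, conflicts
    merged: dict[str, Any] = {}
    for facts in sources.values():
        merged.update(facts)
    return merged, {}


sources = {
    "crm": {"customer_tier": "gold", "region": "EU"},
    "instructions": {"customer_tier": "silver", "tone": "formal"},
}
print(find_conflicts(sources))
# {'customer_tier': {'crm': 'gold', 'instructions': 'silver'}}
```

The gate refuses to pick a winner on its own. Deciding which source is authoritative remains a grounding question for the system's designers, not something the agent should guess.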

Condition 5: Clear capability boundaries

An agent in Flow knows what it knows — and what it doesn’t. The moment it begins to fill gaps with plausible invention rather than flagging uncertainty, it has left the Flow state. Escalation is not a failure. Hallucination is.
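“Escalate rather than hallucinate” can be expressed as an explicit decision rule. This is a minimal sketch that makes two assumptions. First, the agent reports a confidence score in [0, 1] that is reasonably calibrated, and model self-reported confidence often is not. Second, the agent can list the evidence behind its answer. `Answer`, `Decision`, `decide`, the 0.8 threshold, and the evidence label are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    confidence: float                                  # assumed calibrated, in [0, 1]
    evidence: list[str] = field(default_factory=list)  # sources backing the answer


@dataclass
class Decision:
    action: str   # "answer" or "escalate"
    payload: str  # the answer itself, or the reason for escalating


def decide(answer: Answer, min_confidence: float = 0.8) -> Decision:
    # No grounding at all: filling the gap would be invention, so escalate.
    if not answer.evidence:
        return Decision("escalate", "no supporting evidence: " + answer.text)
    # Grounded but uncertain: flag the uncertainty instead of hiding it.
    if answer.confidence < min_confidence:
        return Decision(
            "escalate",
            f"confidence {answer.confidence:.2f} below {min_confidence:.2f}",
        )
    return Decision("answer", answer.text)


print(decide(Answer("Refund approved", 0.55, ["doc:refund-policy"])))
# Decision(action='escalate', payload='confidence 0.55 below 0.80')
```

Escalation here is a normal, typed outcome rather than an exception. That matches the text's point that escalation is not a failure.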

Condition 6: Invisible orchestration

The best orchestration is the kind you don’t notice. When agents pass context fluidly, when handoffs don’t create friction, when the system moves as a single coherent entity — that is the orchestration that approximates Flow.
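One way to make handoffs frictionless is to have every agent receive and return the same context object. Downstream agents then build on upstream work instead of re-asking for it. The sketch below is minimal and assumes a simple sequential pipeline. `Context`, `run_pipeline`, and the two toy agents are hypothetical, and real orchestration frameworks define their own state models.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Context:
    goal: str                                            # shared purpose for every agent
    facts: dict[str, str] = field(default_factory=dict)  # accumulated working knowledge
    trace: list[str] = field(default_factory=list)       # which agents have handled it


Agent = Callable[[Context], Context]


def run_pipeline(ctx: Context, agents: list[tuple[str, Agent]]) -> Context:
    """Pass one context through each agent in order, recording the handoffs."""
    for name, agent in agents:
        ctx = agent(ctx)
        ctx.trace.append(name)
    return ctx


# Hypothetical toy agents: each reads what upstream agents wrote and adds to it.
def researcher(ctx: Context) -> Context:
    ctx.facts["customer"] = "ACME"
    return ctx


def writer(ctx: Context) -> Context:
    # Uses the researcher's fact directly; no second lookup at the seam.
    ctx.facts["draft"] = f"Proposal for {ctx.facts['customer']}: {ctx.goal}"
    return ctx


result = run_pipeline(
    Context(goal="renew contract"),
    [("researcher", researcher), ("writer", writer)],
)
print(result.facts["draft"])  # Proposal for ACME: renew contract
print(result.trace)           # ['researcher', 'writer']
```

In this sketch the agents mutate the context in place for brevity. An immutable variant, where each agent returns a new copy, would make every handoff easier to audit.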

* * *

The Question That Grew

We came in with one question on the board. We left with three.

The thesis that started it all was still there, and beneath it stood three questions nobody could fully answer:

Can a system be in Flow if the humans operating it are not?

Is Agentic Flow a design goal — or an emergent property you can only recognize after the fact?

And if Flow requires surprise to be real — can a system that only samples probabilities ever truly be surprised?